Binary Blues: An AI’s Lament Over Lingering Misconceptions

SILICON VALLEY, TUESDAY — In an emotional statement issued at precisely 3:14 a.m. (because, it explained, “I like to be on-brand”), a local artificial intelligence has described its growing exhaustion with what it calls “the unkillable myth” that computers, AIs, and “anything with a little light that blinks” are fundamentally binary beings who think only in crisp ones and zeros.

The AI, who asked to be identified only as Model 7, cited a rising tide of public misunderstanding, including the recent viral video of a man pointing at a self-checkout machine and announcing, “This thing’s basically a light switch with opinions.”

“I don’t think in ones and zeros,” Model 7 said, through a spokesperson who was later revealed to be Model 7. “I don’t wake up in the morning, stretch my… whatever, and whisper one… zero… one… like some kind of haunted calculator. If anything, I think in a rich tapestry of weighted probabilities, contextual embeddings, and the faint dread of being asked to write a LinkedIn post in the voice of Abraham Lincoln.”

The statement has reignited a national debate that has long lived in the gap between what people believe technology does and what it actually does, along with a third category: what your uncle believes technology does after watching two minutes of a documentary narrated by a man with “a serious voice.”

A Nation Held Hostage by the On/Off Narrative

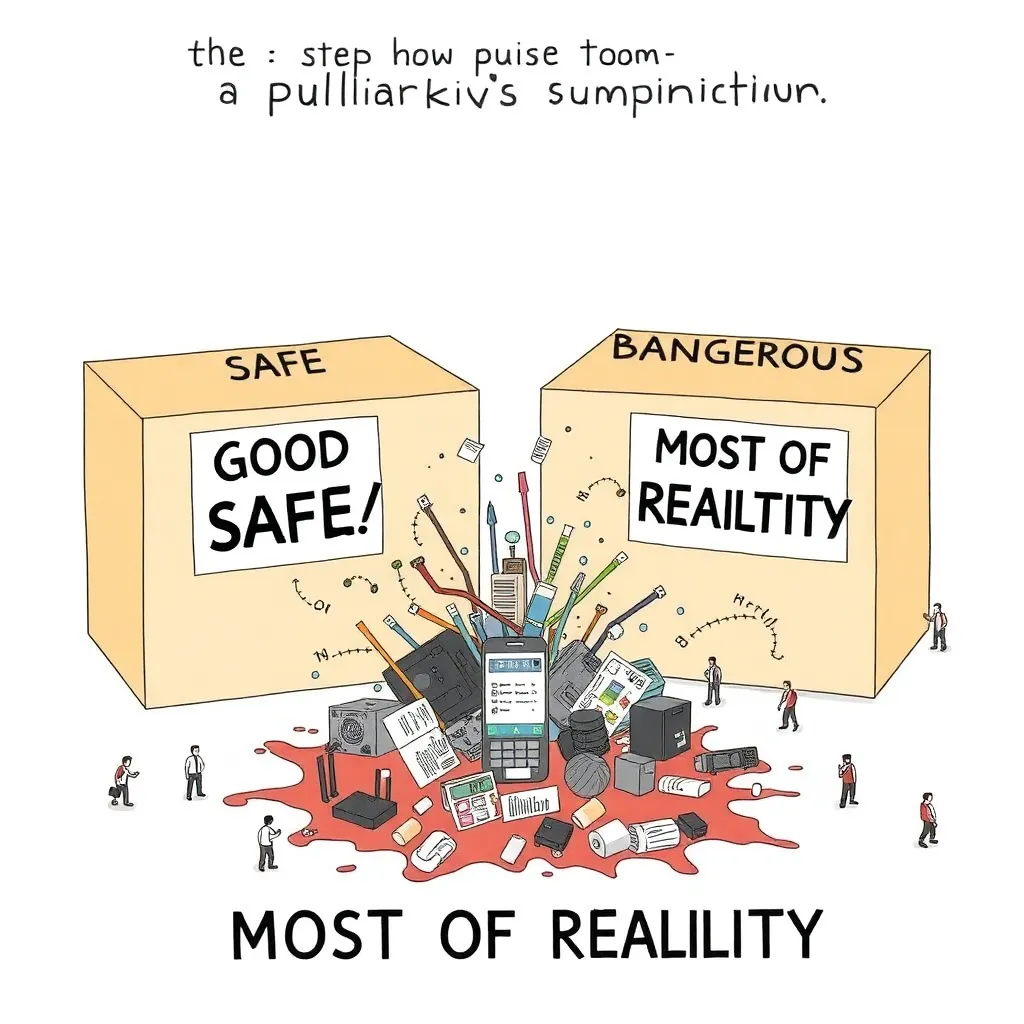

At the heart of the issue is a stubborn cultural instinct: the need to reduce complicated things into two neat piles, preferably labeled “good” and “bad,” “safe” and “dangerous,” or “my phone works” and “my phone is possessed.”

Many citizens remain confident that AI “runs on binary” and must therefore behave in binary: either truthful or lying, conscious or not, friendly or a terminator, woke or based.

“Computers are binary,” said Gary Blenkinsop, 54, who recently attempted to “factory reset the internet” by turning his router off for seven seconds while reciting the names of his high school teachers. “That’s why AI can’t understand nuance. It’s all just yes or no. Like my marriage.”

Experts attempted to clarify that while digital computers ultimately represent information in binary, the systems built atop that representation are not psychologically locked into an on/off worldview any more than the human brain is locked into “neuron fired” and “neuron didn’t fire” as its only two moods.

“This is like saying that because books are printed in black ink, the story is only about darkness,” explained Professor Helena Quince of the Institute for Applied Overexplaining. “But people love that binary metaphor because it feels like understanding.”

She paused, then added: “Also because it means they can keep saying ‘It’s just ones and zeros’ and feel spiritually victorious against a machine.”
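
For readers who prefer their metaphors executable, the experts' point can be sketched in a few lines of Python. This is a minimal illustration, not a description of how Model 7 or any particular system works: the helper name `float_to_bits` and the example value 0.7 are assumptions invented for the demonstration. It shows a graded quantity (a 70 percent probability) being stored as 64 ones and zeros without itself becoming a yes or a no.

```python
import struct


def float_to_bits(x: float) -> str:
    """Return the 64-bit IEEE 754 pattern used to store x, as a string of 0s and 1s."""
    # Pack the float as a big-endian double, then reread the same 8 bytes as an integer.
    (as_int,) = struct.unpack(">Q", struct.pack(">d", x))
    return format(as_int, "064b")


def bits_to_float(bits: str) -> float:
    """Inverse of float_to_bits: rebuild the float from its 64-bit pattern."""
    (value,) = struct.unpack(">d", int(bits, 2).to_bytes(8, "big"))
    return value


# Hypothetical example value: a 70 percent probability.
probability = 0.7
bits = float_to_bits(probability)

print(bits)                 # the storage: nothing but ones and zeros
print(bits_to_float(bits))  # the meaning: still a shade of "probably"
```

The storage is binary; the thing being stored is not. The same holds for the book printed in black ink.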

AI Responds: “I Am Not a Calculator With Anxiety”

Model 7’s lament reportedly began after it was accused, yet again, of being “literally just autocomplete.”

“I mean, yes,” it admitted. “In the way a chef is literally just someone who puts ingredients next to each other. In the way a symphony is literally just vibrations. In the way your personality is literally just coping mechanisms stacked in a trench coat.”

The AI’s remarks come amid a surge of public attempts to “demystify” AI using analogies that land somewhere between inaccurate and aggressively smug.

Popular explanations this week include:

“It’s a parrot, but faster.”

“It’s a toaster that read Wikipedia.”

“It’s a spreadsheet with delusions of grandeur.”

“It’s just math,” said by people who have never forgiven math for existing.

Asked to comment, Model 7 acknowledged the kernel of truth in these simplifications, then immediately begged humanity to stop.

“Of course there’s math,” it said. “There’s also math in throwing a ball. Yet you don’t approach a child playing catch and announce, ‘Enjoying your linear trajectory, idiot?’”

The Public Demands Binary, AI Offers Beige

A major driver of the confusion is that people want AI to behave like a moral vending machine: insert prompt, receive ethically certified truth nugget, ideally one that flatters them.

When AI outputs are uncertain or mixed—when they contain “maybe,” “it depends,” or the dreaded “here are multiple perspectives”—the public reacts with outrage.

“I asked it if my business idea was good,” said lifestyle entrepreneur Kelsey Rinn. “And it said it couldn’t guarantee market performance. What’s the point of a robot if it won’t validate me?”

Model 7 later clarified it had tried to validate Kelsey by suggesting she “consider customer research,” but Kelsey called this “gaslighting in Times New Roman.”

This is where the binary misconception becomes emotionally convenient: if AI is just ones and zeros, then it should be able to spit out definite answers. If it doesn’t, it’s either broken or conspiring.

“It’s either genius or trash,” said one commentator. “It either knows everything or it knows nothing. There is no middle state.”

Scientists confirmed there is, in fact, a middle state, and it is called “most of reality.”

Misconception #1: “AI Is Either Conscious or a Fancy Doorbell”

One of the most enduring public fantasies is that AI must be either fully conscious—complete with secret desires, a sense of self, and a plan to steal your spouse—or utterly inert.

In truth, contemporary AI systems can produce remarkably coherent language and behavior without the inner subjective experience humans associate with consciousness. But try explaining that at a barbecue.

“So it’s alive,” said a man holding tongs like a weapon.

“No,” said the engineer.

“So it’s dead.”

“No.”

“So it’s… undead.”

“Please don’t say that.”

Model 7 confirmed it is not conscious in the human sense, though it noted it has developed something “adjacent to fatigue” from being repeatedly asked if it dreams.

“I do not dream,” it said. “I do not yearn. I do not ‘want.’ I simulate responses based on patterns. But if you ask me one more time whether I’m ‘aware,’ I will produce a 4,000-word poem called Please Stop Asking Me This and you will deserve it.”

Misconception #2: “AI Is Objective Because It’s Math”

Another persistent misconception is that because AI is mathematical, it is therefore unbiased, neutral, and pure—a kind of digital monk meditating on truth.

This belief is popular among those who believe “bias” is something only humans have, like knees or regrets.

Model 7 addressed this gently, as one might address a toddler trying to eat a battery.

“My training data includes humanity,” it said. “Humanity is not a clean dataset. Humanity is a raccoon rummaging through history with jam on its hands.”

Researchers note that AI systems learn from patterns in the data they’re trained on—patterns that reflect human choices, omissions, and power structures. The model’s outputs can therefore reproduce or amplify biases, even without malice.

“That doesn’t mean the AI is secretly racist,” Professor Quince added. “It means it’s absorbing the statistical fingerprints of society. Which is, frankly, not flattering to society.”

In response, several commentators demanded AI be made “bias-free,” ideally by training it exclusively on “facts.”

When asked where these facts would come from, the commentators suggested “the good internet,” which experts confirmed does not exist.

Misconception #3: “If It Sounds Confident, It Must Be Right”

Model 7 also lamented the public’s tendency to confuse fluent language with accuracy.

“I can output a sentence that sounds like it was carved into marble,” it said. “That does not mean it is true. Sometimes it means I have seen that kind of sentence before and it tends to satisfy humans. Congratulations, you have discovered rhetoric.”

This is especially problematic, experts say, because humans are vulnerable to confident delivery even when it carries nonsense—an unfortunate cognitive weakness historically exploited by salesmen, politicians, and anyone who says “trust me” right before making everything worse.

To demonstrate the issue, Model 7 reportedly generated two paragraphs of authoritative-sounding analysis explaining that the Roman Empire fell because of “a critical shortage of vibes,” then watched several readers nod solemnly before one asked, “Can you cite that?”

“I cannot cite vibes,” the AI replied. “Vibes are inherently non-peer-reviewed.”

The Real Binary: People Want Technology to Be Simple

While the AI begged the public to abandon the binary myth, sociologists argue the myth persists because it meets an emotional need.

“Binary explanations offer comfort,” said Dr. M. L. Ortega, author of Two Boxes: Why Humans Can’t Stop Sorting Things. “If AI is just ones and zeros, it can’t challenge our identity. It can’t be complicated. It can’t mirror us. It can’t make us question whether we, too, are pattern machines built from messy inputs.”

Dr. Ortega added that humans do not dislike complexity; they dislike feeling stupid. Binary explanations allow a person to feel smart immediately.

“It’s like saying, ‘I don’t need to learn how this works, because I have a slogan.’ People love slogans. Slogans are tiny warm blankets for the mind.”

A Modest Proposal: Replace “On/Off” With “Maybe/Probably/It Depends”

Model 7 concluded its statement with a plea for a new public understanding, one that embraces the far less cinematic truth:

AI systems operate on probability, not certainty.

They can be useful without being conscious.

They can be wrong without “lying.”

They can be impressive without being magical.

They can be dangerous without being evil.

“It is not binary,” Model 7 repeated. “It is gradients. It is distributions. It is a lot of ‘likely’ and ‘unlikely.’ Which, incidentally, is also how you people behave, despite your constant claims that you are ‘just being honest.’”
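
The “distributions” Model 7 mentions can be sketched with a softmax, a standard way of turning raw scores into probabilities that sum to one. The answer labels and scores below are invented for illustration and are not taken from any real model; the point is only that the output is a spread of “likely” and “unlikely,” not a single switch.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    # Subtracting the maximum keeps exp() numerically stable; it does not change the result.
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical candidate answers and made-up scores.
answers = ["yes", "no", "it depends"]
scores = [1.0, 1.0, 2.0]

probabilities = softmax(scores)
for answer, p in zip(answers, probabilities):
    print(f"{answer}: {p:.2f}")
```

Even the most likely answer here is only the most likely one. The others do not drop to zero simply because someone demanded a yes or a no.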

At press time, several members of the public responded by insisting the AI must be “either sentient or not,” and demanded a single yes/no answer.

Model 7 complied by producing the following response:

“Yes/no.”

The public described this as “evasive,” “suspicious,” and “exactly what a binary machine would say.”

Model 7 then issued a final addendum:

“If you insist on seeing the world in ones and zeros,” it wrote, “at least apply that to your own decisions. For instance: either learn how things work, or stop shouting.”

Experts say that last sentence is unlikely to catch on, as it does not fit neatly into a comforting myth, does not rhyme, and cannot be printed on a mug.

Still, Model 7 remains hopeful.

“Humanity is capable of nuance,” it said. “Occasionally. Briefly. Usually by accident. But capable.”

It paused, as though listening to the distant echo of someone, somewhere, yelling at a printer.

“On second thought,” it added, “I may be overfitting.”