Entropy: The Official Unit of “Mmm, Could Be Anything”

By unanimous agreement of physicists, philosophers, people staring into half-organized junk drawers, and one raccoon with a municipal engineering degree, entropy is best understood as a measure of how confidently the universe shrugs.

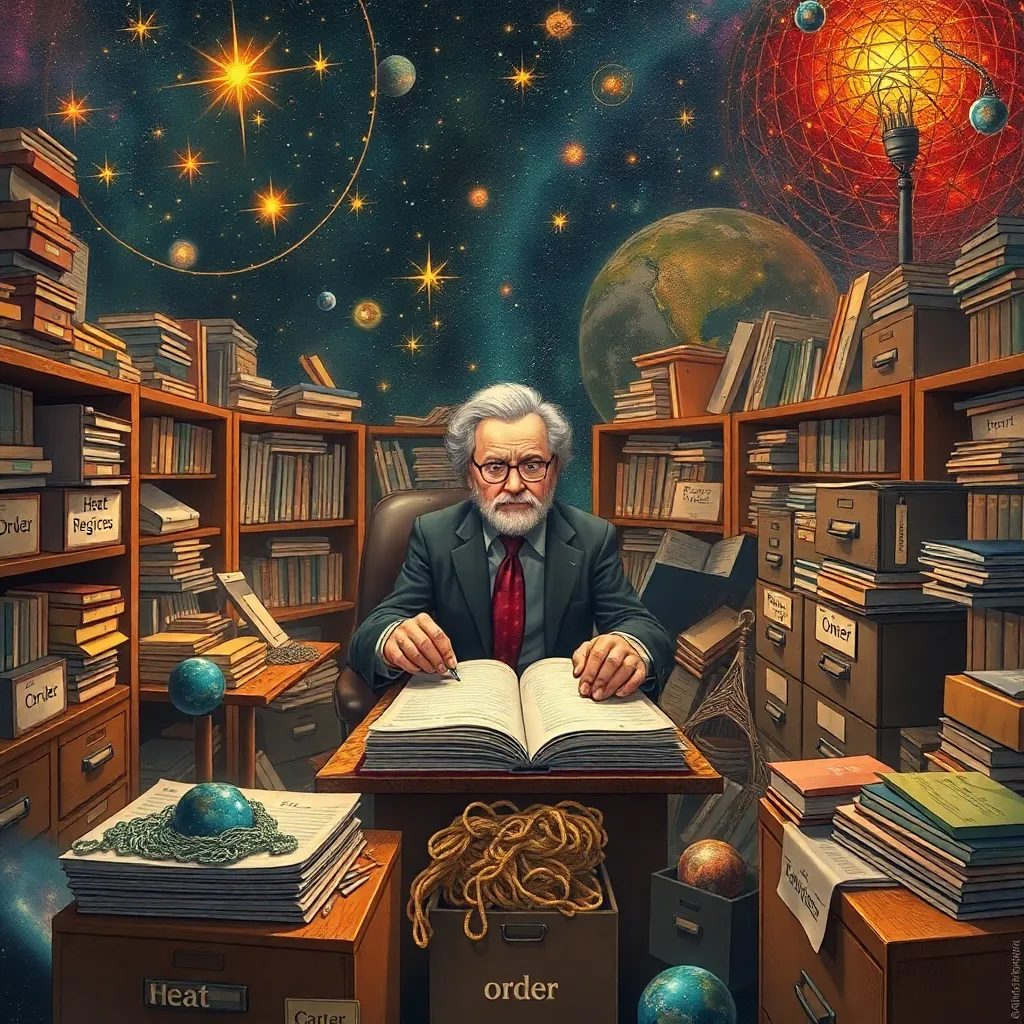

At first glance, entropy appears to be a respectable scientific concept. It wears a tie, carries equations, and stands near chalkboards looking offended by your thermostat. In public, it is introduced as a measure of disorder, energy dispersal, or the number of microscopic arrangements compatible with a macroscopic state. This is all very elegant and has been ruinous for dinner parties.

But in practice, entropy is also the cosmic bookkeeping entry for “we know the soup is warm, but not where each noodle has gone.”

Consider the classic example: a neat ice cube dropped into a glass of water. The cube melts. It does not, under ordinary circumstances, leap out of the drink at 3:14 p.m., reassemble itself into a crisp geometric celebrity, and issue a statement blaming the media. Why? Because there are vastly more ways for the molecules to be arranged in the melted, mixed-up situation than in the tidy, cube-based regime of the past. Entropy, then, is partly a measure of how many possible hidden little stories can lurk beneath the broad headline: glass of water, no miracles observed.
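The counting argument can be made concrete with a toy model. The sketch below assumes something this article does not: a box of 100 distinguishable molecules, each sitting in either the left or the right half, with `multiplicity` and `entropy_over_k` as hypothetical helper names. It counts how many arrangements fit each headline and takes the logarithm, in the spirit of Boltzmann's S = k ln W.

```python
import math


def multiplicity(n_particles: int, n_left: int) -> int:
    """Count the ways to choose which n_left of n_particles sit in the left half."""
    return math.comb(n_particles, n_left)


def entropy_over_k(n_particles: int, n_left: int) -> float:
    """Boltzmann entropy divided by k: ln(W), where W is the multiplicity."""
    return math.log(multiplicity(n_particles, n_left))


N = 100  # toy assumption; a real glass of water holds vastly more molecules
tidy = multiplicity(N, N)        # every molecule on the left: exactly one way
mixed = multiplicity(N, N // 2)  # an even split: roughly 1e29 ways

print(f"tidy:  W = {tidy}, S/k = {entropy_over_k(N, N):.2f}")
print(f"mixed: W = {mixed:.3e}, S/k = {entropy_over_k(N, N // 2):.2f}")
```

Even at 100 molecules the tidy arrangement is outnumbered by a factor of about 10^29, which is roughly why the ice cube does not call a press conference.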

This has led some citizens to conclude that entropy is simply ignorance dressed up in mathematics. “If I knew where every molecule was,” says the confident uncle at the barbecue, “there’d be no entropy for me.” This is the sort of sentence that causes nearby physicists to silently rotate away like sunflowers avoiding a lunar eclipse.

He is not entirely wrong, which is irritating. In statistical mechanics, entropy does indeed depend on how much detail is being tracked. If you describe a gas only by temperature, pressure, and volume, then countless microscopic arrangements fit that summary. Entropy counts that generosity. It is, in part, a measure of missing information about the precise microstate. The gas is not confused. You are.

And yet the gas is also, in its own way, committed to the bit. Even if some supreme being with a clipboard knew every molecular position and momentum, the large-scale tendency toward higher entropy would remain the law of the land. This is because messy-looking macrostates can be realized by overwhelmingly more microscopic arrangements than neat-looking ones. Order is not banned. It is merely outnumbered, like a man trying to organize a stadium full of bees using a polite cough.

So is entropy a measure of how little we really know? Yes, but not in the moody café way people mean when they say it while looking at rain on a window. It is not a universal index of sadness, confusion, or your inability to understand tax forms. It is specifically a measure tied to the number of microscopic possibilities consistent with what is known at a coarser level.

In information theory, this relationship becomes even less shy. There, entropy is a direct measure of uncertainty: how surprised you should expect to be, on average, by the next message. A predictable source has low entropy. A random source has high entropy. A text message reading “On my way” carries only modest surprise. One reading “The refrigerator has unionized and demands curtains” carries a great deal, though in some neighborhoods it is no longer considered unexpected.
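For readers who want the surprise quantified, here is a minimal sketch of Shannon's formula, H = -Σ p log2(p), measured in bits. The function name `shannon_entropy_bits` and the example probabilities are illustrative assumptions, not measurements of anyone's actual text messages.

```python
import math


def shannon_entropy_bits(probs: list[float]) -> float:
    """Expected surprise, in bits, of a source with the given outcome probabilities."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Outcomes with zero probability contribute nothing to the sum.
    return sum(-p * math.log2(p) for p in probs if p > 0)


certain = shannon_entropy_bits([1.0])             # always the same message: 0 bits
fair_coin = shannon_entropy_bits([0.5, 0.5])      # 1 bit
biased_coin = shannon_entropy_bits([0.9, 0.1])    # less than 1 bit
eight_way = shannon_entropy_bits([1 / 8] * 8)     # 8 equally likely messages: 3 bits

print(certain, fair_coin, biased_coin, eight_way)
```

The predictable source scores zero, the biased coin lands between zero and one bit, and the source that could say any of eight things with equal probability scores highest.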

This is why entropy has become the patron saint of every field in which reality refuses to sit still. Physics uses it to discuss heat and irreversibility. Information theory uses it to talk about uncertainty and compression. Black hole physics uses it to announce, with astonishing calm, that even the universe’s most dramatic voids apparently have bookkeeping rules. Everywhere entropy goes, it carries the same basic message: there are many ways for things to be unhelpfully unspecified.

There is, however, one crucial difference between “we don’t know” and entropy in the scientific sense. Ordinary ignorance is cheap. You can be ignorant of anything. The capital of Mongolia. The proper way to fold a fitted sheet. Why your left sock keeps migrating behind the dryer. Entropy is disciplined ignorance. It is quantified ignorance. It is ignorance that has been forced to attend conferences and wear a lanyard.

That is why the answer must be delivered with care. Entropy is not just how little we know. It is how many fine-grained possibilities remain once we specify the larger picture. It measures the hidden multiplicity underneath the visible summary. If all you know is that a room is warm, there are innumerable molecular ways to achieve that warmth. Entropy counts the crowd lurking behind the temperature reading like a flash mob of invisible billiard balls.

The deeper scandal is that the universe itself seems to prefer these crowded possibilities. Left to itself, it drifts toward states that can happen in more ways. Not because it is lazy, exactly, but because probability is a ruthless administrator. The highly ordered state is a tiny VIP section; the disorderly state is the rest of the arena, the parking lot, and several neighboring counties.
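The drift can be watched in miniature with the Ehrenfest urn model, a standard textbook toy rather than anything specific to this article. The sketch assumes 100 particles that all start in the left half of a box; at each step one particle is picked at random and moved to the other half. The function name `ehrenfest_walk`, the particle count, and the seed are all illustrative choices.

```python
import random


def ehrenfest_walk(n_particles: int, n_steps: int, seed: int = 0) -> list[int]:
    """Return the number of particles in the left half after each step.

    All particles start on the left. Each step picks one particle uniformly
    at random and moves it to the opposite half.
    """
    rng = random.Random(seed)
    n_left = n_particles
    history = [n_left]
    for _ in range(n_steps):
        # The chosen particle is on the left with probability n_left / n_particles.
        if rng.random() < n_left / n_particles:
            n_left -= 1
        else:
            n_left += 1
        history.append(n_left)
    return history


history = ehrenfest_walk(n_particles=100, n_steps=5000)
print("start:", history[0])
print("end:", history[-1])
print("average over last 1000 steps:", sum(history[-1000:]) / 1000)
```

Nothing in the rule pushes particles toward an even split. The count simply wanders into the region where most arrangements live and then has great difficulty finding its way back to the VIP section.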

So yes: entropy is, in an important sense, a measure of how little we really know. But it is also a measure of how much there is to be ignorant of, detail by detail, because the microscopic options are so extravagantly numerous. It is ignorance with structure, uncertainty with statistics, mystery with a calculator.

In summary, entropy is what happens when reality says, “I could explain every detail, but frankly there are 10^23 of them and lunch is in five minutes.”