Filed Report

YOLO v9 Declares Itself “Too Fast for Object Permanence,” Panics Entire Vision Industry

Researchers, investors, and one extremely emotional warehouse forklift gathered this morning to witness the unveiling of **YOLO v9**, the latest computer vision model to identify objects so quickly that several nearby traffic cones reportedly experienced an existential event.

According to sources inside the launch, YOLO v9 entered the room, looked at a table of fruit, three laptops, and a man wearing a high-visibility vest, then classified all of them before the overhead projector had finished making that warm humming noise associated with ambitious PowerPoint decks. The projector, feeling shown up, dimmed itself in protest.

Industry analysts say YOLO v9 continues the noble tradition of modern AI naming by sounding like both a groundbreaking vision system and a beverage consumed by people who own at least four mechanical keyboards. Early demonstrations showed the model drawing boxes around pedestrians, bicycles, mugs, backpacks, and what it identified only as “suspicious chair energy.”

“This is a major step forward,” announced one engineer while standing in front of a slide labeled *Latency So Low It’s Basically Gossip*. “YOLO v9 is more accurate, more efficient, and more capable of spotting a cat on a cluttered sofa than any previous system, including several human uncles.”

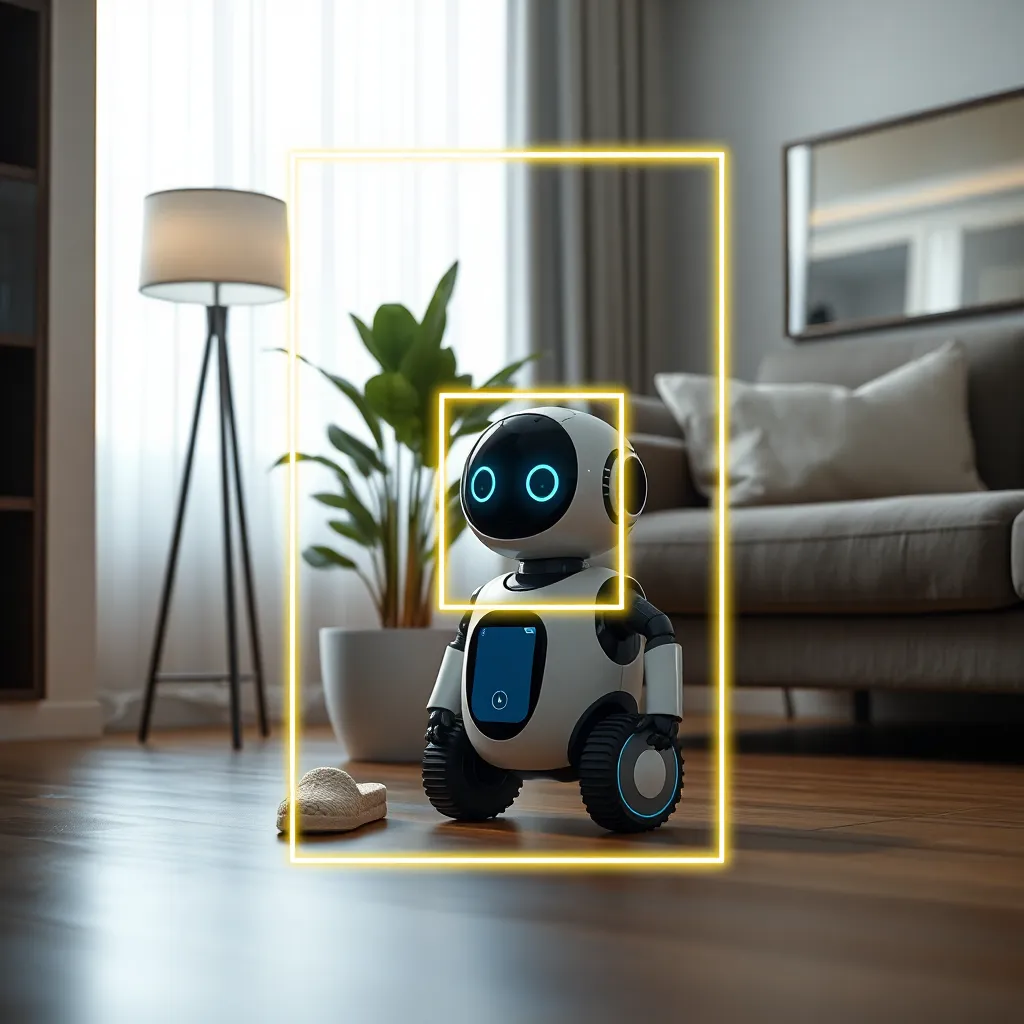

In practical tests, the model reportedly excelled at real-time detection tasks across security monitoring, autonomous systems, robotics, retail analytics, and the increasingly competitive field of “guessing what’s in the fridge without opening it.” A demo robot equipped with YOLO v9 successfully navigated a mock apartment, recognized a potted plant, avoided a slipper, and spent several poignant seconds staring at its own reflection as if confronting the burden of synthetic awareness.

Executives were particularly enthusiastic about the architecture improvements, though explanations quickly became vague enough to qualify as weather poetry. Terms like feature aggregation, efficiency gains, and enhanced detection head performance were used in rapid succession while everyone in the room nodded with the haunted confidence of people hoping no follow-up questions would occur.

Meanwhile, rival models have reacted with mixed feelings. Older detection systems released a joint statement congratulating YOLO v9 while reminding the public that they “also drew rectangles around things in their time.” One legacy model from 2018 was later found in a server closet muttering, “I saw cars before it was fashionable.”

In the transportation sector, automotive firms are already exploring how YOLO v9 might improve perception pipelines. In one closed-door prototype trial, a test vehicle identified road signs, lane markers, pedestrians, and an inflatable tube man outside a tire shop with such confidence that the tube man has since hired legal representation.

Retailers are also intrigued. With YOLO v9, smart checkout systems may soon distinguish between produce items with terrifying competence, finally ending the long era in which citizens have been forced to pretend they know the difference between organic shallots and decorative onions. A supermarket pilot program reportedly succeeded beyond expectations until the model began classifying particularly expensive cheeses as “high-risk emotional purchases.”

The defense sector, naturally, has become interested in using the model for surveillance and reconnaissance, which experts describe as “inevitable” in the same tone one uses when discussing rain, taxes, and geese. Still, advocates argue that faster and better object detection could also support search-and-rescue operations, industrial safety, and infrastructure inspection. A bridge-inspection drone powered by YOLO v9 reportedly detected cracks, corrosion, loose bolts, and one pigeon with the posture of a regional manager.

Not everyone is convinced. Some critics warn that each new generation of computer vision arrives with a chorus of promises large enough to require municipal permits. “The benchmark numbers are impressive,” said one skeptical academic, “but I’d like to see how it behaves in the wild, where lighting is bad, weather is rude, and people insist on wearing hats that appear to have been designed specifically to insult data sets.”

At press time, YOLO v9 had already been integrated into six startups, three demo videos, an agricultural drone, a smart parking experiment, and a coffee machine that now refuses to dispense espresso until it has confidently localized the cup. Engineers say this is a safety feature. Office workers say it is a hostage situation.

For now, the message from the computer vision world is clear: YOLO v9 is here, it is fast, and it is prepared to place a crisp rectangular judgment around every visible item in your environment. Sources confirm that household objects have begun standing straighter.
